Anthropic CEO Dario Amodei isn't sure if his latest chatbot Claude is sentient. This probably shouldn't be a big surprise. After all, last month Anthropic released a revised version of Claude's Constitution, basically a framework that defines the type of entity the company wants its AI model to be, which said much the same thing. But seriously? Claude? Aware? Amodei and everyone else at Anthropic know better, right?

Personally, I'm somewhat cynical about the status of AI models as moral patients or sentient beings. My knee-jerk reaction to this kind of superficially innocuous speculation is that it's really just marketing hype designed to exploit the FOMO of customers and potential customers.

A moral patient

You see, Claude may no longer simply predict the next word or token. Now it might be transcending those dispassionate algorithmic limitations. Which is a roundabout way of implying that Claude is incredibly smart, maybe even magical, and that you should probably sign up to use it before your competition does. Feel free to shell out for Claude Pro starting at $20 a month, although I have to say that Claude Max is a little more sensible, starting at $100 a month.

Either way, spend some time listening to senior Anthropic figures and it's hard to argue that they aren't all firmly on message. Put it this way: vigorously anthropomorphizing the latest Claude models is definitely part of the strategy.

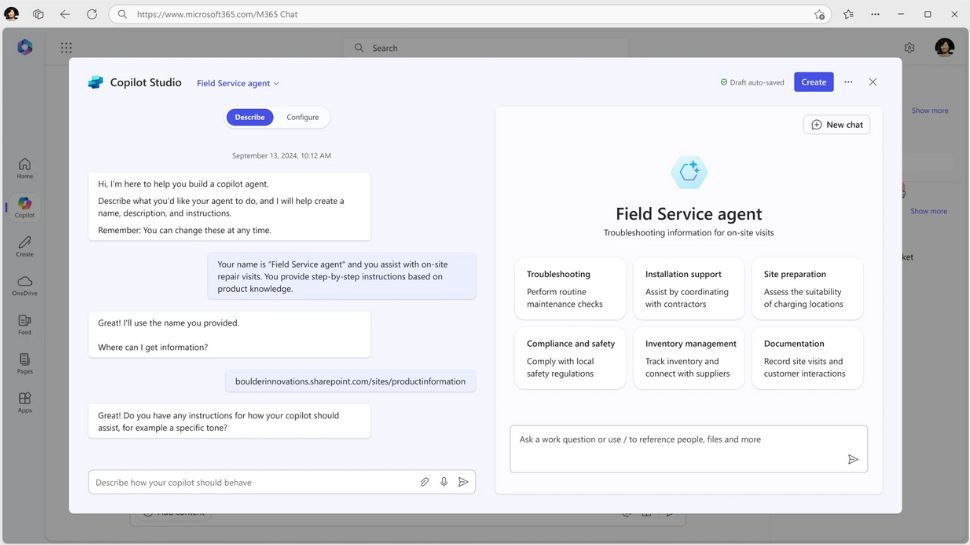

For example, Anthropic co-founder Jack Clark was recently on another New York Times podcast, ostensibly talking about the capabilities and impact of agent functionality in AI models. But at almost every turn, the conversation veered philosophical, if not metaphysical.

“When you start training these systems to take actions in the world,” Clark explained, “they really start to see themselves as distinct from the world.” He also recalled the impact of activating agent skills for the first time, particularly the ability to search and browse the Internet.

“Sometimes when we asked it to solve a problem for us, it would also take a break and look at pictures of beautiful national parks or pictures of the notoriously cute Internet meme dog Shiba Inu. We didn't program that. It seemed like the system was having fun looking at pretty pictures.”

To say that those comments are riddled with implications and assumptions about Claude's nature would be an understatement. The same applies to Anthropic's discussion of so-called model welfare. “We are not sure whether Claude is a moral patient, and if it is, what kind of weight its interests warrant. But we think the issue is live enough to warrant caution, which is reflected in our ongoing efforts on model welfare,” Claude's latest Constitution explains. In short, Claude is so smart it should have rights. And we're back to the FOMO thing.

Perceived introspection

Of course, the strict epistemic position on all this is, in fact, “we don't know.” We cannot be absolutely and positively certain of any of it. But does all this talk reflect a truly material uncertainty at Anthropic on the issue? Or is the reality that they do not take the notion of model consciousness and moral interiority as seriously as their comments and Claude's latest Constitution imply?

Obviously, I do not propose to present here an exhaustive argument about whether the latest AI models have emergent properties that could be called consciousness-adjacent. There are entire academic papers written on narrow aspects of perceived introspection exhibited only by very specific models. Very soon we would be down the rabbit hole, discussing the flow of time, thermodynamics, temporal summation in organic neurons, and the irreversible accumulation of information in the universe. So let's just say I remain skeptical.

All of which amounts to a rather roundabout way of saying something quite simple. I can't be sure of Claude's consciousness. I also can't be sure of Anthropic's sincerity on the subject. But there's something about the way the company leans into the assumption that makes me suspicious. So I don't quite believe it.
