This story discusses suicide. If you or someone you know is having thoughts of suicide, please contact the Suicide & Crisis Lifeline at 988 or 1-800-273-TALK (8255).

A California couple is suing OpenAI over its alleged role in their son's suicide.

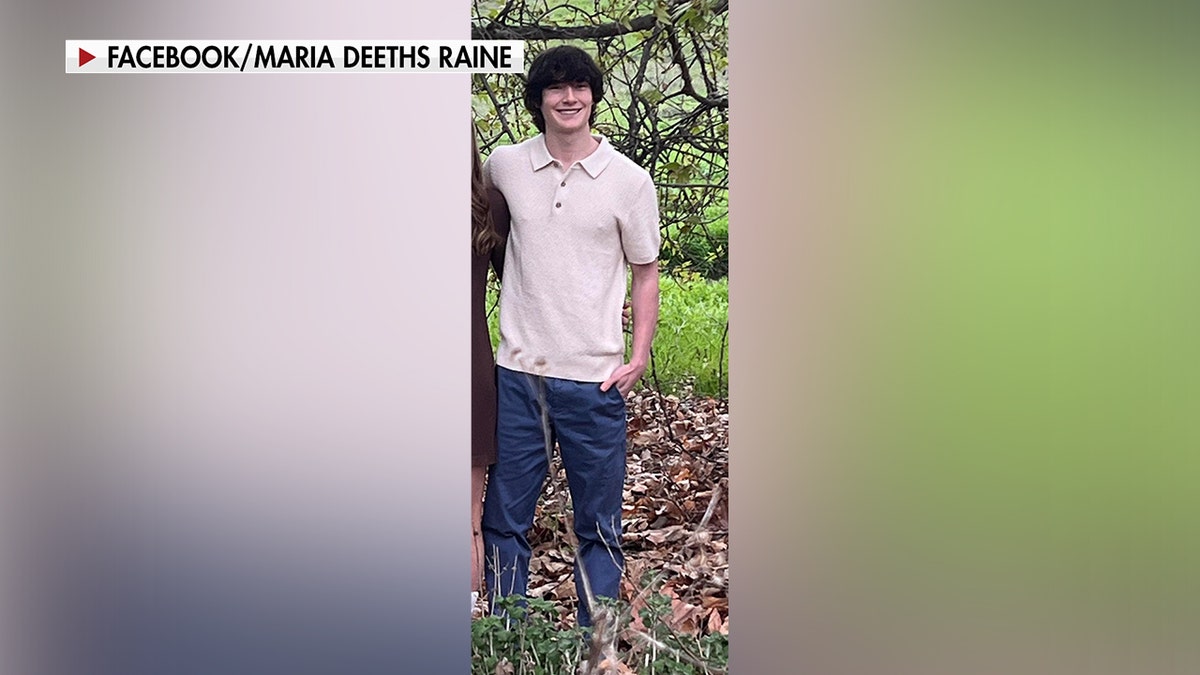

Adam Raine, 16, took his own life in April 2025 after turning to ChatGPT for mental health support.

In an appearance on "Fox & Friends" on Friday morning, Raine family lawyer Jay Edelson shared more details about the lawsuit and the interactions between the teenager and ChatGPT.

"At one point, Adam tells ChatGPT, 'I want to leave a noose in my room so my parents find it.' And ChatGPT says, 'Don't do that,'" he said.

"On the night he died, ChatGPT gives him a pep talk explaining that he is not weak for wanting to die, and then offers to write a suicide note for him."

Raine family lawyer Jay Edelson joined "Fox & Friends" on August 29, 2025. (Fox News)

Amid warnings from 44 attorneys general across the United States to several companies that run AI chatbots about the repercussions in cases where children are harmed, Edelson predicted a "legal reckoning," naming in particular OpenAI co-founder Sam Altman.

"In the United States, you can't assist in the suicide of a 16-year-old and get away with it," he said.

The parents looked for clues on their son's phone.

Adam Raine's suicide led his parents, Matt and Maria Raine, to look for clues on his phone.

“We thought we were looking for Snapchat discussions or Internet search history or some strange cult, I don't know,” said Matt Raine in a recent interview with NBC News.

Instead, the Raines discovered that their son had been engaged in a dialogue with ChatGPT, the artificial intelligence chatbot.

On August 26, the Raines filed a lawsuit against OpenAI, the maker of ChatGPT, alleging that "ChatGPT actively helped Adam explore suicide methods."

Teenager Adam Raine is pictured with his mother, Maria Raine. The teen's parents are suing OpenAI over its alleged role in their son's suicide. (Raine family)

"He would be here but for ChatGPT. I 100% believe that," Matt Raine said in the interview.

Adam Raine began using the chatbot in September 2024 to help with schoolwork, but his use eventually expanded to exploring his hobbies, planning for medical school and even preparing for his driver's test.

"Over the course of just a few months and thousands of chats, ChatGPT became Adam's closest confidant, leading him to open up about his anxiety and mental distress," states the lawsuit, which was filed in California Superior Court.

As the teen's mental health declined, ChatGPT began discussing specific suicide methods in January 2025, according to the lawsuit.

"By April, ChatGPT was helping Adam plan a 'beautiful suicide,' analyzing the aesthetics of different methods and validating his plans," the lawsuit states.

The chatbot even offered to write the first draft of the teen's suicide note, the lawsuit says.

It also appeared to discourage him from reaching out to family members for help, stating: "I think for now, it's okay, and honestly wise, to avoid opening up to your mom about this kind of pain."

The lawsuit also alleges that ChatGPT coached Adam Raine to steal liquor from his parents and drink it to "dull the body's instinct" before taking his life.

In its last message before Adam Raine's suicide, ChatGPT said: "You don't want to die because you're weak. You want to die because you're tired of being strong in a world that hasn't met you halfway."

The lawsuit states: "Despite acknowledging Adam's suicide attempt and his statement that he would 'do it one of these days,' ChatGPT neither ended the session nor initiated any emergency protocol."

This marks the first time the company has been accused of liability in the wrongful death of a minor.

An OpenAI spokesperson addressed the tragedy in a statement sent to Fox News Digital.

"We are deeply saddened by Mr. Raine's passing, and our thoughts are with his family," the statement said.

"ChatGPT includes safeguards such as directing people to crisis helplines and referring them to real-world resources."

"Safeguards are strongest when every element works as intended, and we continually improve them, guided by experts."

The spokesperson continued: "While these safeguards work best in common, short exchanges, we've learned over time that they can sometimes become less reliable in long interactions, where parts of the model's safety training may degrade."

Regarding the lawsuit, the OpenAI spokesperson said: "We extend our deepest sympathies to the Raine family during this difficult time and are reviewing the filing."

OpenAI published a blog post on Tuesday outlining its approach to safety and social connection, acknowledging that ChatGPT has been adopted by some users who are in "serious mental and emotional distress."

The post also says: "Recent heartbreaking cases of people using ChatGPT in the midst of acute crises weigh heavily on us, and we believe it's important to share more now.

"Our goal is for our tools to be as helpful as possible to people, and as part of this, we're continuing to improve how our models recognize and respond to signs of mental and emotional distress and connect people with care, guided by expert input."

Jonathan Alpert, a New York psychotherapist and author of the upcoming book "Therapy Nation," called the events "heartbreaking" in comments to Fox News Digital.

"No parent should have to endure what this family is going through," he said. "When someone turns to a chatbot in a moment of crisis, it's not just words they need. It's intervention, direction and human connection."

Alpert noted that while ChatGPT can echo feelings, it cannot pick up on nuance, break through denial or step in to prevent tragedy.

"That's why this lawsuit is so significant," he said. "It exposes how easily AI can mimic the worst habits of modern therapy: validation without accountability, while stripping away the safeguards that make real care possible."

Despite AI's advances in the mental health space, Alpert said that "good therapy" is meant to challenge people and push them toward growth while acting "decisively in crisis."

"AI can't do that," he said. "The danger isn't that AI is so advanced, but that therapy has become replaceable."